RegretsReporter is a community effort to crack YouTube’s recommendation algorithm and figure out why it suggests videos that you don’t want to watch.

You’re casually browsing through YouTube, video after video. Suddenly, you jolt upright. Some content creator you’ve never heard of is trying to tell you the moon landing was faked…or worse. A lot worse. And like you, Mozilla’s RegretsReporter browser plugin wants to know how in the world that happened.

In a way, Mozilla’s new RegretsReporter plugin, available for Mozilla Firefox and Chrome, harks back to the 2017 “Elsagate” scandal, in which creators hoping to game YouTube’s recommendation algorithm published videos that put seemingly child-friendly characters into bizarre, disturbing situations. YouTube later said that it had demonetized those types of videos and prevented them from appearing on YouTube Kids.

Still, the problems haven’t gone away, as innocuous searches for “science” videos can lead down a rabbit hole of conspiracy theories that YouTube’s recommendation algorithm blindly suggests. In a scenario like that, you might click and “report” the video to YouTube, but what happens then? RegretsReporter essentially allows you to file a separate, anonymized complaint that researchers can use to parse how and why YouTube is showing you something.

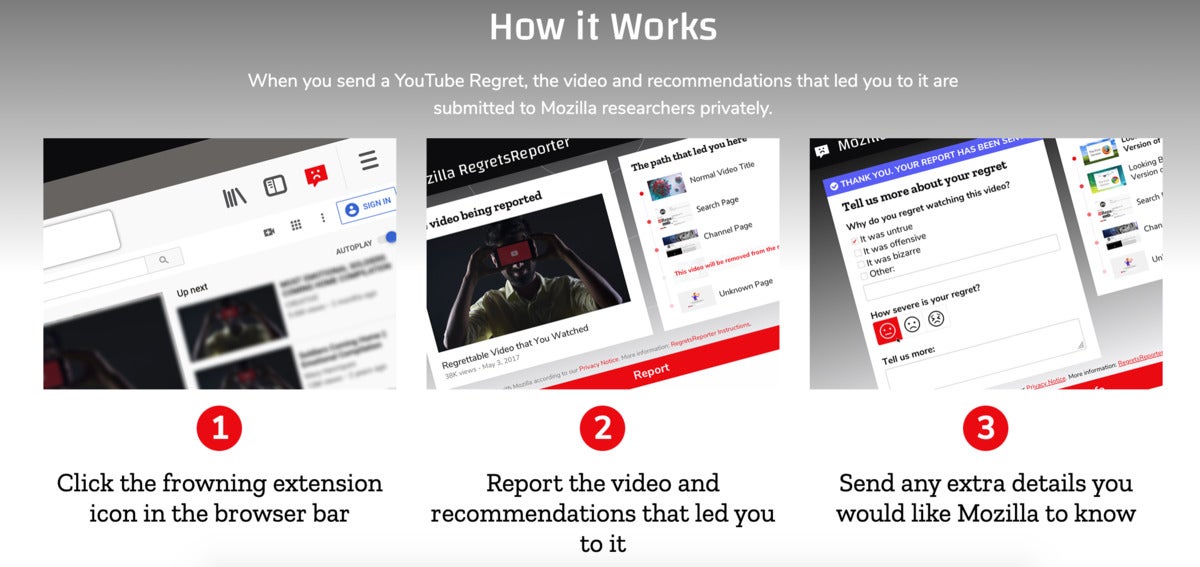

Here’s how it works: After you install the browser extension, RegretsReporter quietly runs in the background, anonymously keeping track of how long you’ve been surfing YouTube. If you end up on a video that you find reportable (Mozilla encourages you to act in good faith), RegretsReporter notes the URL of the video and asks you why you find it reportable.

The extension then supplies a list of the videos you watched beforehand, keeping your identity anonymous, in order to establish an X-to-Y-to-Z chain of the videos that gradually led you down YouTube’s rabbit hole. The goal is to determine which types of videos lead to racist, violent, or conspiratorial content, and whether there is a pattern of videos that eventually leads to those results. Mozilla said that it plans to share its research publicly.

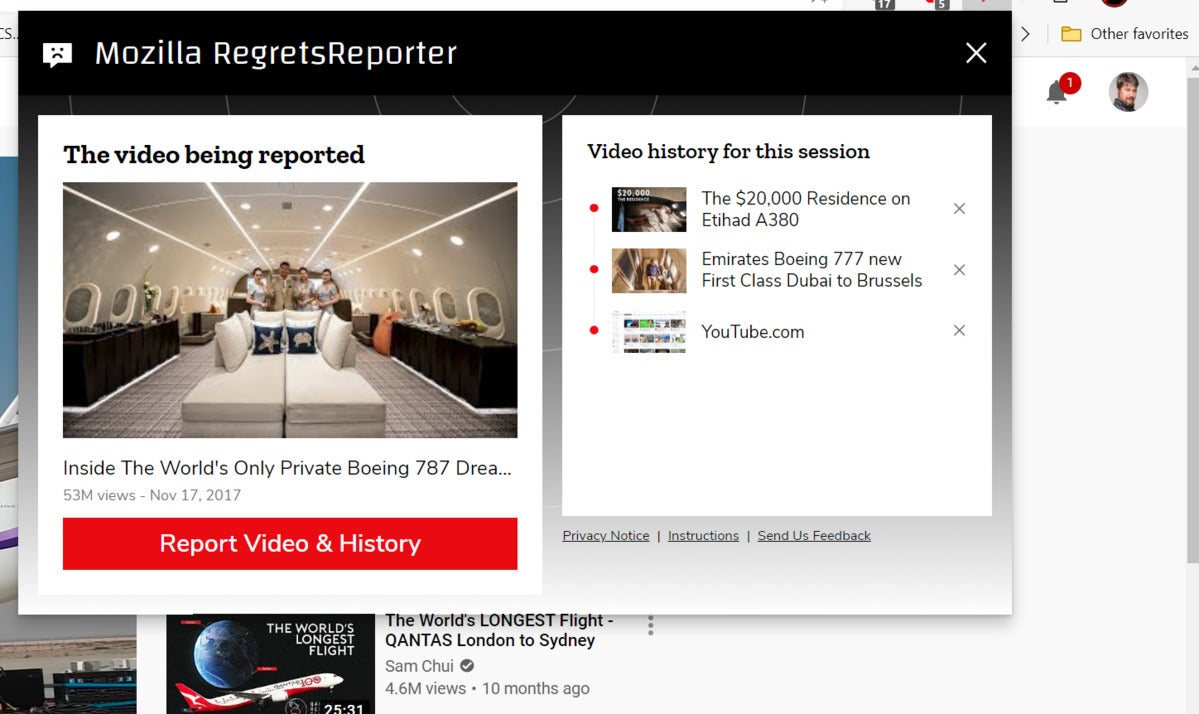

Mozilla’s RegretsReporter browser extension for Firefox and Chrome allows you to report “regrettable” videos to researchers, who will try to crack YouTube’s “black box” algorithm and determine why a video was shown. (Image: Mozilla)

Why does YouTube show what it does?

Mozilla’s efforts to examine the AI algorithms of sites like YouTube began in late 2019, when Ashley Boyd, Mozilla’s vice president for advocacy, said she noticed her YouTube recommendations surfacing Korean videos after she had chosen to watch a Korean romantic comedy. Seventy percent of all time spent on YouTube is spent viewing YouTube-recommended content, she said.

“If we think about how our data is collected, offered without our full understanding, and then used to target us with ads and other content—this is actually trying to flip the script a little bit and have people use their own information for part of a collective research project,” Boyd said in an interview.

An example of how a video is reported using Mozilla’s RegretsReporter extension. (PCWorld did not report the video, nor did we find it objectionable.) (Image: Mark Hachman / IDG)

RegretsReporter’s data will be subject to the same frailties as its human users: Mozilla acknowledged that what counts as “regrettable” varies from person to person. The tool doesn’t track metadata, so it’s up to researchers to infer what in a video led YouTube to recommend the next one in the chain. Users can choose whether to let RegretsReporter supply the chain of videos that led from one to the next, though the data is less useful if they opt out. Still, YouTube’s algorithm is essentially a black box, and Mozilla’s goal is to shed some light on what’s inside, Boyd said.

YouTube is just one site of interest for Mozilla’s researchers. Boyd said Mozilla has already begun asking questions about Twitter trends, for example, and the virality that hashtags and other techniques can generate. Mozilla has also built up a body of work examining YouTube, both through its own research and through the somewhat related TheirTube, a project by artist Tomo Kihara created as part of the Mozilla Creative Awards 2020. TheirTube created six personas (liberals, conservatives, climate-change deniers and more) in an attempt to show what videos YouTube would recommend to each.

PCWorld used similar techniques to examine what “fake news” was being pushed to Clinton and Trump supporters on Facebook, arguably an even more profound influencer of public opinion than YouTube is.

“We’re definitely interested in going more broadly,” Boyd said. “One of the reasons why we need a ‘reporter’ [plugin], and not just use YouTube as a reporter, is because we hope to use tools like this to do exactly that kind of investigation on other platforms.”

As PCWorld’s senior editor, Mark focuses on Microsoft news and chip technology, among other beats.