
Nearly 500 COVID-19-related apps worldwide were analyzed.

Andy Greenberg, wired.com

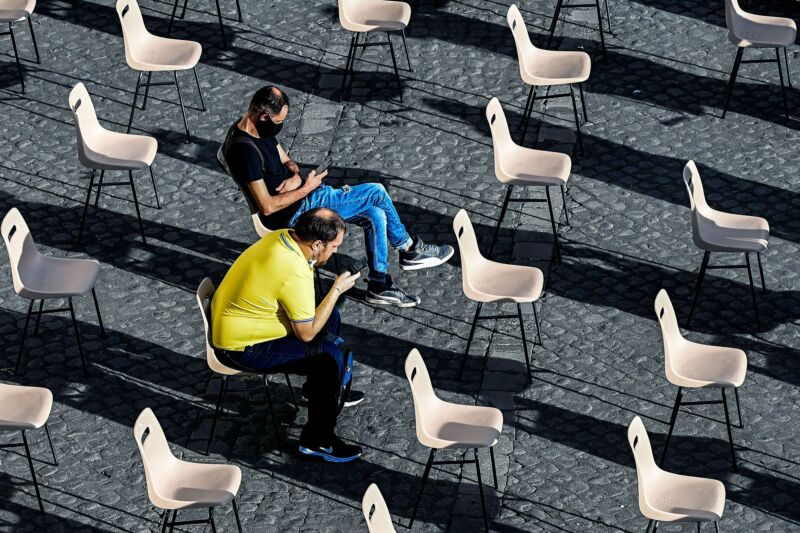

Around 44 percent of COVID-19 apps on iOS asked for access to the phone’s camera, and 32 percent asked for access to photos.

When the notion of enlisting smartphones to help fight the COVID-19 pandemic first surfaced last spring, it sparked a months-long debate: should apps collect location data, which could help with contact tracing but potentially reveal sensitive information? Or should they take a more limited approach, only measuring Bluetooth-based proximity to other phones? Now, a broad survey of hundreds of COVID-19-related apps reveals that the answer is all of the above. And that has made the COVID-19 app ecosystem a kind of wild, sprawling landscape, full of potential privacy pitfalls.

Late last month, Jonathan Albright, director of the Digital Forensics Initiative at the Tow Center for Digital Journalism, released the results of his analysis of 493 COVID-19-related iOS apps across dozens of countries. His study of those apps, which tackle everything from symptom-tracking to telehealth consultations to contact tracing, catalogs the data permissions each one requests. At WIRED’s request, Albright then broke down the dataset further to focus specifically on the 359 apps that handle contact tracing, exposure notification, screening, reporting, workplace monitoring, and COVID-19 information from public health authorities around the globe.

The results show that only 47 of that subset of 359 apps use Google and Apple’s more privacy-friendly exposure-notification system, which restricts apps to only Bluetooth data collection. More than six out of seven COVID-19-focused iOS apps worldwide are free to request whatever privacy permissions they want, with 59 percent asking for a user’s location when in use and 43 percent tracking location at all times. Albright found that 44 percent of COVID-19 apps on iOS asked for access to the phone’s camera, 22 percent of apps asked for access to the user’s microphone, 32 percent asked for access to their photos, and 11 percent asked for access to their contacts.
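The "more than six out of seven" figure follows directly from the two counts above. As a quick check, here is a minimal Python sketch of that arithmetic; the constant and function names are illustrative and do not come from Albright's dataset, and it assumes the 359-app subset is the denominator.

```python
from fractions import Fraction

# Counts reported in the article; the names are illustrative only.
TOTAL_APPS = 359         # apps in the subset Albright broke out for WIRED
APPLE_GOOGLE_APPS = 47   # apps using the Apple/Google exposure-notification system


def share_outside_api(total: int, using_api: int) -> Fraction:
    """Return the exact fraction of apps that do not use the system."""
    return Fraction(total - using_api, total)


outside = share_outside_api(TOTAL_APPS, APPLE_GOOGLE_APPS)
print(f"Apps outside the system: {TOTAL_APPS - APPLE_GOOGLE_APPS} of {TOTAL_APPS}")
print(f"Share outside the system: {float(outside):.1%}")
print(f"More than six out of seven? {outside > Fraction(6, 7)}")
```

That works out to 312 of 359 apps, or about 86.9 percent, which is just above six out of seven (roughly 85.7 percent).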

“It’s hard to justify why a lot of these apps would need your constant location, your microphone, your photo library,” Albright says. He warns that, even for COVID-19-tracking apps built by universities or government agencies—often at the local level—that introduces the risk that private data, sometimes linked with health information, could end up out of users’ control. “We have a bunch of different, smaller public entities that are more or less developing their own apps, sometimes with third parties. And we don’t know where the data’s going.”

The relatively low number of apps that use Google and Apple’s exposure-notification API compared to the total number of COVID-19 apps shouldn’t be seen as a failure of the companies’ system, Albright points out. While some public health authorities have argued that collecting location data is necessary for contact tracing, Apple and Google have made clear that their protocol is intended for the specific purpose of “exposure notification”—alerting users directly to their exposure to other users who have tested positive for COVID-19. That excludes the contact tracing, symptom checking, telemedicine, and COVID-19 information and news that other apps offer. The two tech companies have also restricted access to their system to public health authorities, which has limited its adoption by design.

“Almost as bad as what you’d expect”

But Albright’s data nonetheless shows that many US states, local governments, workplaces, and universities have opted to build their own systems for COVID-19 tracking, screening, reporting, exposure alerts, and quarantine monitoring, perhaps in part due to Apple and Google’s narrow focus and data restrictions. Of the 18 exposure-alert apps that Albright counted in the United States, 11 use Google and Apple’s Bluetooth system. Two of the others are based on a system called PathCheck Safeplaces, which collects GPS information but promises to anonymize users’ location data. Others, like Citizen Safepass and the CombatCOVID app used in Florida’s Miami-Dade and Palm Beach counties, ask for access to users’ location and Bluetooth proximity information without using Google and Apple’s privacy-restricted system. (The two Florida apps asked for permission to track the user’s location in the app itself, strangely, not in an iOS prompt.)

But those 18 exposure-notification apps were just part of a larger category of 45 apps that Albright classified as “screening and reporting” apps, whose functions range from contact tracing to symptom logging to risk assessment. Of those apps, 24 asked for location while the app was in use, and 20 asked for location at all times. Another 19 asked for access to the phone’s camera, 10 asked for microphone access, and nine asked for access to the phone’s photo library. One symptom-logging tool called CovidNavigator inexplicably asked for users’ Apple Music data. Albright also examined another 38 “workplace monitoring” apps designed to help keep COVID-19-positive employees quarantined from coworkers. Half of them asked for location data when in use, and 13 asked for location data at all times. Only one used Google and Apple’s API.

“In terms of permissions and in terms of the tracking built in, some of these apps seem to be almost as bad as what you’d expect from a Middle Eastern country,” Albright says.

493 apps

Albright assembled his survey of 493 COVID-19-related apps with data from app analytics firms 42matters, AppFigures, and AppAnnie, as well as by running the apps himself while using a proxied connection to monitor their network communications. In some cases, he sought out public information from app developers about functionality. (He says he restricted his study to iOS rather than Android because there have been previous studies that focused exclusively on Android and raised similar privacy concerns, albeit while surveying far fewer apps.) Overall, he says the results of his survey don’t point to any necessarily nefarious activity, so much as a sprawling COVID-19 app marketplace where private data flows in unexpected and less than transparent directions. In many cases, users have little choice but to use the COVID-19 screening app that’s implemented by their college or workplace and no alternative to whatever app their state’s health authorities ask users to adopt.

When WIRED reached out to Apple for comment, the company responded in a statement that it carefully vets all iOS apps related to COVID-19, including those that don’t use its exposure-notification API. That vetting, the company said, is meant to make sure the apps are being developed by reputable organizations like government agencies, health NGOs, and companies credentialed in health issues or medical and educational institutions, and to ensure they’re not deceptive in their requests for data. Apple also notes that iOS 14 warns users with an indicator dot at the top of their screen when an app is accessing their microphone or camera and lets them choose to share approximate rather than fine-grained locations with apps.

But Albright notes that some COVID-19 apps he analyzed went beyond direct requests for permission to monitor the user’s location to include advertising analytics, too. While Albright didn’t find any advertising-focused analytics tools built into exposure-notification or contact-tracing apps, he found that, among apps he classifies as “information and updates,” three used Google’s ad network and two used Facebook Audience Network, and many others integrated software development kits for analytics tools including Branch, Adobe Auditude, and Airship. Albright warns that any of those tracking tools could reveal users’ personal information to third-party advertisers, potentially including even users’ COVID-19 status. (Apple noted in its statement that starting this year, developers will be required to provide information about both their own privacy practices and those of any third parties whose code they integrate into their apps to be accepted into the App Store.)

“Collect data and then monetize it”

Given the rush to create COVID-19-related apps, it’s not surprising that many are aggressively collecting personal data and, in some cases, seeking to profit from it, says Ashkan Soltani, a privacy researcher and former Federal Trade Commission chief technologist. “The name of the game in the apps space is to collect data and then monetize it,” Soltani says. “And there is essentially an opportunity in the marketplace because there’s so much demand for these types of tools. People have COVID-19 on the brain and therefore developers are going to fill that niche.”

Soltani adds that Google and Apple, by allowing only official public health authorities to build apps that access their exposure-notification API, built a system that drove other developers to build less restricted, less privacy-preserving COVID-19 apps. “I can’t go and build an exposure-notification app that uses Google and Apple’s system without some consultation with public health agencies,” Soltani says. “But I can build my own random app without any oversight other than the App Store’s approval.”

Concerns of data misuse apply to official channels as well. Just in recent weeks, the British government has said it will allow police to access contact-tracing information and in some cases issue fines to people who don’t self-isolate. And after a public backlash, the Israeli government walked back a plan to share contact-tracing information with law enforcement so it could be used in criminal investigations.

Not necessarily nefarious

Apps that ask for location data and collect it in a centralized way don’t necessarily have shady intentions. In many cases, knowing at least elements of an infected person’s location history is essential to effective contact tracing, says Mike Reid, an infectious disease specialist at UCSF, who is also leading San Francisco’s contact-tracing efforts. Google and Apple’s system, by contrast, prioritizes the privacy of the user but doesn’t share any data with health agencies. “You’re leaving the responsibility entirely to the individual, which makes sense from a privacy point of view,” says Reid. “But from a public health point of view, we’d be completely reliant on the individual calling us up, and it’s unlikely people will do that.”

Reid also notes that, with Bluetooth data alone, you’d have little idea about when or where contacts with an infected person might have occurred, or whether the infected person was inside or outside, wearing a mask at the time, or behind a plexiglass barrier. Those are all factors whose importance has become better understood since Google and Apple first announced their exposure-notification protocol.

All that helps explain why so many developers are turning to location data, even with all the privacy risks that location-tracking introduces. And that leaves users to sort through the privacy implications and potential health benefits of an app’s request for location data on their own—or to take the simpler path out of the minefield and just say no.

This story originally appeared on wired.com.